Posted by Nodus Labs | September 14, 2020

Text as a Musical Score using InfraNodus and Orca

Music can be represented as text. The notes (C2, D4, A1), representing the frequencies and the octaves, can be aligned together to create a musical score. Musical sequences are usually written chronologically, but there are also other kinds of representation. For example, we have recently shown how any musical score can be represented as a network graph using InfraNodus (based on the notes’ co-occurrences in the melodies). This graph can then be used to play the music back in a way that does not necessarily replicate its chronology but stays loyal to the original structure of the musical score.
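To make the idea of a co-occurrence graph concrete, here is a minimal Python sketch. It is not the InfraNodus implementation: the function name `cooccurrence_graph`, the `window` parameter, and the choice to link notes that fall inside the same sliding window are all assumptions made for illustration.

```python
from collections import Counter


def cooccurrence_graph(notes, window=2):
    """Build weighted, undirected edges between notes that appear close together.

    `window` is the size of the sliding window including the current note,
    so window=2 links only directly adjacent notes (an illustrative choice).
    """
    edges = Counter()
    for i, a in enumerate(notes):
        # Look at the notes that follow `a` inside the window.
        for b in notes[i + 1 : i + window]:
            if a != b:
                # Sort the pair so (C2, D4) and (D4, C2) count as the same edge.
                edges[tuple(sorted((a, b)))] += 1
    return edges


melody = ["C2", "D4", "A1", "C2", "D4"]
graph = cooccurrence_graph(melody)
for (a, b), weight in sorted(graph.items()):
    print(f"{a} -- {b} (weight {weight})")
```

The edge weights record how often two notes sit next to each other, which is the kind of structure a graph-based playback could follow instead of the original chronology.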

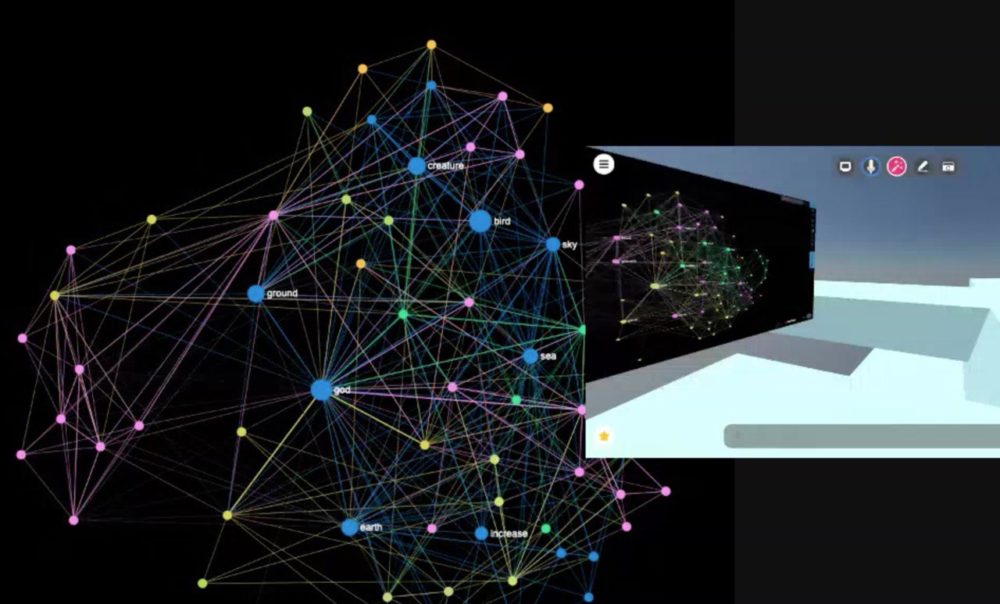

For this project we (OS/XY = Aerodynamika + NSDOS) decided to reverse the process and see how any text, not only a musical score, could be used to play music. We used the InfraNodus text network analysis and visualization tool and the Orca software from hundredrabbits, which lets you make complex musical patterns using a combination of symbols. We used Mozilla Hubs VR as the space to host the live performance.

We developed a special algorithm that maps every word to a specific note and octave. The note’s frequency is based on the letters the word consists of, while the note’s octave is based on the word’s length. So, for example, the word “music” becomes C3, while a longer word like “viscosity” becomes F6 (the higher the frequency, the higher the pitch).
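The exact mapping is not spelled out here, so the following Python sketch only illustrates the general shape of such an algorithm. Everything in it is an assumption: the helper `word_to_note`, the rule that picks the note name from the sum of letter positions, and the rule that derives the octave from half the word length. It is not expected to reproduce the C3 and F6 examples above.

```python
NOTE_NAMES = ["C", "D", "E", "F", "G", "A", "B"]


def word_to_note(word, min_octave=1, max_octave=7):
    """Map a word to a note name plus octave (hypothetical rules, for illustration)."""
    letters = [ch for ch in word.lower() if "a" <= ch <= "z"]
    if not letters:
        raise ValueError("word must contain at least one ASCII letter")

    # Note name: derived from the letters (a=1, b=2, ...), wrapped onto 7 note names.
    letter_sum = sum(ord(ch) - ord("a") + 1 for ch in letters)
    name = NOTE_NAMES[letter_sum % len(NOTE_NAMES)]

    # Octave: grows with word length, clamped to a playable range.
    octave = max(min_octave, min(max_octave, len(letters) // 2 + 1))
    return f"{name}{octave}"


for word in ["music", "viscosity"]:
    print(word, "->", word_to_note(word))
```

Whatever the concrete rules are, the useful property is that the mapping is deterministic: the same word always produces the same note, and longer words land in higher octaves.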

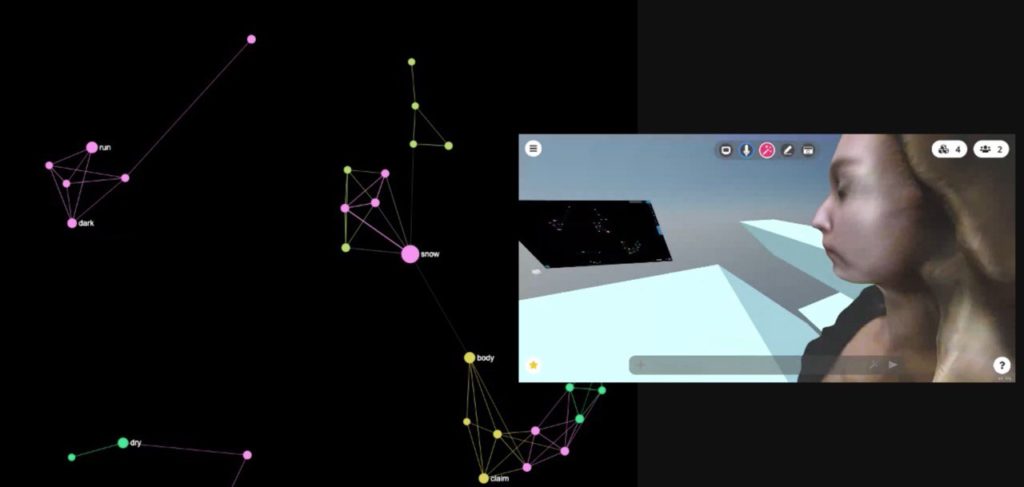

A text network visualization becomes a visual musical score represented as a graph, which can be used as an instrument that plays music in a way that reflects the text’s original structure.

We can then use the words in the graph to play MIDI notes with an external or internal synth (GliderVerb, Usine, or Teenage Engineering’s OP-Z or OP-1). This approach is similar to what we did when we used the network graph to represent and visualize the structure of the music’s notation. In this case, however, we used the words instead of the notes.
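To play a note such as C3 over MIDI, its name has to be turned into a MIDI note number. The Python sketch below shows one common convention, where C4 is note 60; some synths and DAWs label octaves differently, so the octave offset is an assumption, as is the helper name `note_to_midi`. It handles natural notes only and does not send anything to a device.

```python
# Semitone offset of each natural note above C.
SEMITONES = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}


def note_to_midi(note):
    """Convert a note like 'C3' to a MIDI note number, assuming C4 = 60."""
    name, octave = note[0].upper(), int(note[1:])
    number = 12 * (octave + 1) + SEMITONES[name]
    if not 0 <= number <= 127:
        raise ValueError(f"{note} is outside the MIDI range 0-127")
    return number


for note in ["C3", "F6"]:
    print(note, "->", note_to_midi(note))
```

The resulting number is what a note-on message would carry to the synth.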

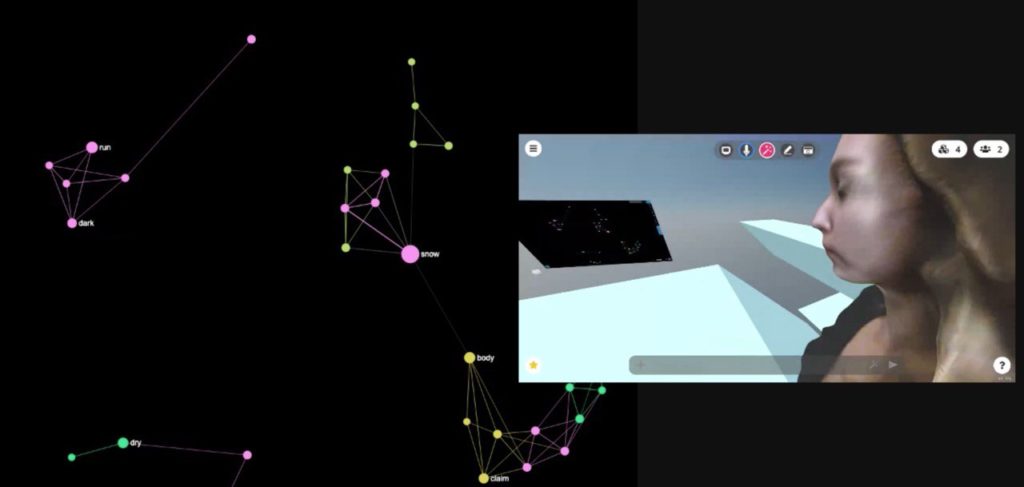

One part of the OS/XY collective, @Aerodynamika, was playing the “texts” that were created live from Berlin using InfraNodus.

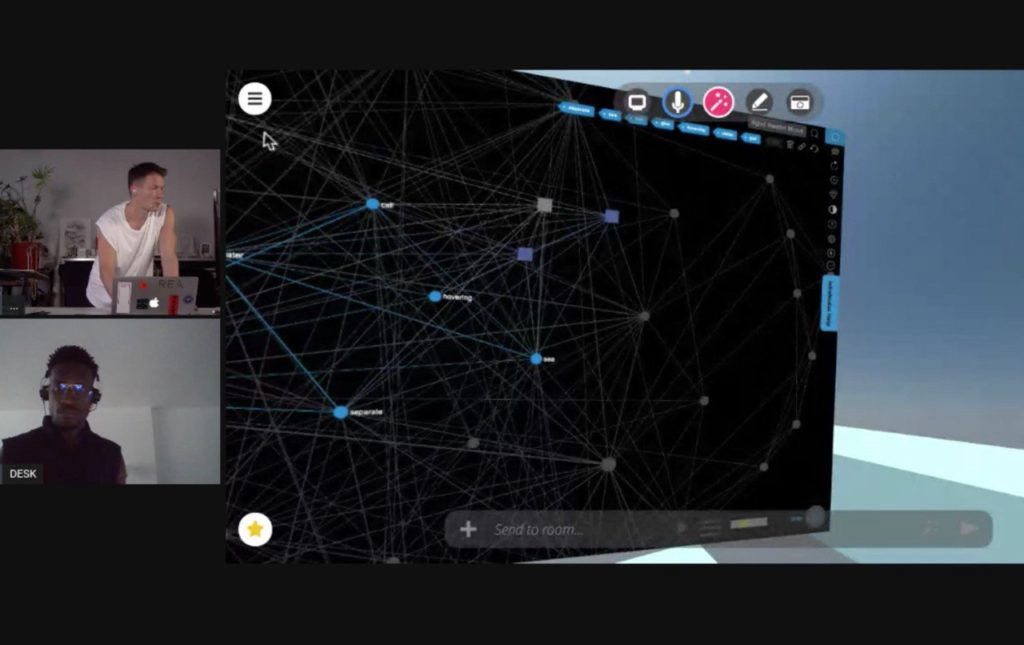

The other part of the collective, Kirikoo Des aka NSDOS, was playing from Paris, primarily using the Orca software by HundredRabbits. Orca is a system that lets you create a musical score using a textual pattern.

We then combined the two systems and fed the output sound into the Mozilla Hubs VR space. This made the live performance accessible to an external audience and also gave us a real-time sync link over the internet (VR applications are quite demanding in terms of real-time performance, so it’s a great way to sync online).

Another positive aspect of introducing VR was that we had a shared space to exchange visual imagery and ideas, which we could then use to bounce new textual scores into InfraNodus, where they would be converted into music.

On the other side, Koo Des, while in the VR world, was using motion-tracking software to control Orca with body movements. This let us create a direct feedback loop between all the elements using both physical and virtual spaces.

Also check out how we can visualize a musical score as a network of notes: