Posted by Nodus Labs | January 10, 2020

Network Graph as a Musical Instrument

Music can be visualized as a graph. There are many ways to do this; one is to represent notes (with their octaves) as nodes and their co-occurrences as the connections between them. This representation reveals patterns in a composition and lets us apply powerful graph theory algorithms to uncover insights into the structure of the music we’re playing.

Below we present a case study conducted with the InfraNodus MIDI app at the 36th Chaos Communication Congress (36C3), organized by the Chaos Computer Club (CCC) in Leipzig, Germany. It was performed live by OS/X.Y (Aerodynamika and NSDOS) at the closing event of 36C3 (special thanks to DJ Spock for the invitation).

The Basic Setup: Orca, Teenage Engineering, MIDI and InfraNodus

Our basic setup was the following:

- Orca software by Hundredrabbits (open-source), used as a composition device and a MIDI controller.

- InfraNodus text network visualization software by Nodus Labs (open-source), used as a composition device and a MIDI controller.

- Two portable OP-Z devices by Teenage Engineering, used as the synths.

Orca, operated by Koo Des / NSDOS, ran self-replicating code based on the Game of Life, which triggered sounds on one OP-Z and also sent a separate MIDI signal via USB to the InfraNodus software.
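Orca patches are written with Orca's own grid operators, so the following is not Orca code. It is a minimal Python sketch of the Game of Life idea mentioned above: one generation step on a wrapping grid, plus a hypothetical mapping (an assumption for illustration, not how the performance was actually wired) in which each live cell's row selects a note from a C major scale.

```python
from typing import Dict, List, Set, Tuple

Cell = Tuple[int, int]  # (x, y) grid coordinates

# Hypothetical mapping: row y of the grid selects a note from a C major scale.
SCALE = [60, 62, 64, 65, 67, 69, 71, 72]  # MIDI note numbers


def life_step(live: Set[Cell], width: int, height: int) -> Set[Cell]:
    """Advance Conway's Game of Life one generation on a wrapping (toroidal) grid."""
    counts: Dict[Cell, int] = {}
    for x, y in live:
        for dx in (-1, 0, 1):
            for dy in (-1, 0, 1):
                if dx == 0 and dy == 0:
                    continue
                neighbour = ((x + dx) % width, (y + dy) % height)
                counts[neighbour] = counts.get(neighbour, 0) + 1
    # A cell is born with exactly 3 live neighbours and survives with 2 or 3.
    return {c for c, k in counts.items() if k == 3 or (k == 2 and c in live)}


def cells_to_notes(live: Set[Cell]) -> List[int]:
    """Map the live cells to a sorted list of distinct MIDI notes (assumed mapping)."""
    return sorted({SCALE[y % len(SCALE)] for _, y in live})


grid = {(1, 0), (1, 1), (1, 2)}  # a vertical "blinker"
print(cells_to_notes(grid))      # [60, 62, 64]
grid = life_step(grid, 8, 8)     # the blinker turns horizontal
print(cells_to_notes(grid))      # [62]
```

Each generation yields a new set of notes, so even a simple pattern like the blinker alternates between a three-note chord and a single note.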

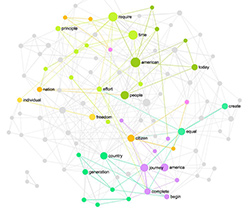

InfraNodus then turned the incoming notes into a graph. Each note triggered a sample on the OP-Z, while the structure and composition of the sequence were produced live by NSDOS and Orca working as a human-machine duo. For example, note C in octave 2 on channel 2 was a particular sound on the OP-Z and was represented as the node #C22 in InfraNodus. Once 16 note-nodes had been received, they were displayed as a graph, with the notes’ co-occurrences forming its connections (edges). Thus, playing #C22 before #D14 produced a directed edge from node C22 to node D14.
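The labelling and edge-building described above can be sketched in Python. This is an illustration under stated assumptions, not InfraNodus's implementation: the post does not say how sharps are spelled or how octaves are numbered, so the sketch assumes sharps written as `s` (to keep labels valid hashtags), MIDI note 60 = C4, 1-based channels, and non-overlapping batches of 16 notes. The function names are hypothetical.

```python
from collections import Counter
from typing import Iterable, List

# Assumed spelling: sharps as "s" so labels stay valid hashtags (e.g. "#Cs22").
NAMES = ["C", "Cs", "D", "Ds", "E", "F", "Fs", "G", "Gs", "A", "As", "B"]


def node_label(midi_note: int, channel: int) -> str:
    """Build a '#<name><octave><channel>' label, assuming MIDI 60 = C4 and 1-based channels."""
    octave = midi_note // 12 - 1
    return f"#{NAMES[midi_note % 12]}{octave}{channel}"


def co_occurrence_graphs(labels: Iterable[str], batch: int = 16) -> List[Counter]:
    """Split the stream into batches of `batch` notes (an incomplete final batch is
    ignored) and count a directed edge a -> b each time note a is played right before b.
    The counts act as edge weights."""
    notes = list(labels)
    graphs = []
    for start in range(0, len(notes) - batch + 1, batch):
        chunk = notes[start:start + batch]
        graphs.append(Counter(zip(chunk, chunk[1:])))
    return graphs


print(node_label(36, 2))  # #C22 (C in octave 2, channel 2)
print(node_label(26, 4))  # #D14 (D in octave 1, channel 4)

stream = [node_label(36, 2), node_label(26, 4)] * 8  # 16 alternating notes
graph = co_occurrence_graphs(stream)[0]
print(graph[("#C22", "#D14")], graph[("#D14", "#C22")])  # 8 7
```

Counting adjacent pairs is the simplest reading of "co-occurrence"; a wider window would also link notes that sound a few steps apart.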

Once added to the graph, these nodes produced a MIDI sequence in InfraNodus, which was then played on the second OP-Z with a different set of sounds. Aerodynamika controlled the InfraNodus interface to produce more sounds: for example, clicking a node played the note it represents, while selecting an automatically identified cluster played a chord sequence. Special modes in InfraNodus also make it possible to sync to the BPM and play a more rhythmic structure, or to click a node, see all the graphs in which that note appears, and switch to them, accessing musical patterns not previously played in that context.
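Hearing a selected cluster as a chord comes down to sending one MIDI Note On message per node. The sketch below builds those raw 3-byte messages as laid out in the MIDI 1.0 specification and computes a BPM-synced step length. The `#<name><octave><channel>` label format (with sharps spelled `s`), the MIDI-60-equals-C4 octave convention, the default velocity, and the step size are all assumptions for illustration, the helper names are hypothetical, and actually sending the bytes to an OP-Z (for example through a MIDI library) is left out.

```python
import re
from typing import List, Tuple

NAMES = ["C", "Cs", "D", "Ds", "E", "F", "Fs", "G", "Gs", "A", "As", "B"]
# Assumed label format: '#<name><octave><channel>' with one-digit octave and channel.
LABEL = re.compile(r"#([A-G]s?)(\d)(\d)")


def parse_label(label: str) -> Tuple[int, int]:
    """Return (MIDI note, 1-based channel) for a label such as '#C22' (MIDI 60 = C4)."""
    m = LABEL.fullmatch(label)
    if m is None or m.group(1) not in NAMES:
        raise ValueError(f"unrecognised node label: {label!r}")
    octave, channel = int(m.group(2)), int(m.group(3))
    return (octave + 1) * 12 + NAMES.index(m.group(1)), channel


def note_on(note: int, channel: int, velocity: int = 100) -> bytes:
    """Raw MIDI 1.0 Note On: status 0x90 OR'd with the zero-based channel number."""
    return bytes([0x90 | (channel - 1), note, velocity])


def note_off(note: int, channel: int) -> bytes:
    """Raw MIDI 1.0 Note Off (status 0x80) with release velocity 0."""
    return bytes([0x80 | (channel - 1), note, 0])


def cluster_to_chord(cluster: List[str]) -> List[bytes]:
    """Sound every node of a cluster together: one Note On per node."""
    return [note_on(*parse_label(label)) for label in cluster]


def step_seconds(bpm: float, steps_per_beat: int = 4) -> float:
    """Length of one sequencer step synced to `bpm` (4 steps per beat = sixteenths)."""
    return 60.0 / bpm / steps_per_beat


chord = cluster_to_chord(["#C22", "#E22", "#G22"])
print([msg.hex() for msg in chord])  # ['912464', '912864', '912b64']
print(step_seconds(120))             # 0.125
```

Sending the Note On messages one `step_seconds` apart, instead of all at once, would turn the same cluster into an arpeggiated sequence.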

The Musical Network Graph as a Composition Device

The most interesting part for us was to conceive of a network graph as a diagram that traces the patterns produced in music, and then to apply various graph theory algorithms to approach music-making differently, creating sounds that had not been played before.

For example, InfraNodus has a feature that measures how connected a graph is. If the graph is too densely connected, InfraNodus automatically produces new, previously unplayed notes, which expand the current range of the music and influence both the soundscape and the original device sending information to it. Conversely, if the graph is too dispersed, it identifies the least connected clusters and tries to connect them, creating new combinations in the soundscape that are still based on notes we’ve already heard.
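The post does not say how InfraNodus measures connectedness or finds its clusters. As a rough sketch, assuming plain directed density as the measure, weakly connected components as a simple stand-in for InfraNodus's clusters, and arbitrary thresholds (all hypothetical, as are the function names), the decision logic could look like this:

```python
from typing import Dict, List, Set, Tuple

Edge = Tuple[str, str]  # directed edge: (played first, played next)


def density(nodes: Set[str], edges: Set[Edge]) -> float:
    """Directed density: distinct non-loop edges divided by n * (n - 1)."""
    n = len(nodes)
    links = {e for e in edges if e[0] != e[1]}
    return len(links) / (n * (n - 1)) if n > 1 else 0.0


def components(nodes: Set[str], edges: Set[Edge]) -> List[Set[str]]:
    """Weakly connected components (edge direction ignored); every edge endpoint
    must be in `nodes`."""
    adj: Dict[str, Set[str]] = {v: set() for v in nodes}
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    seen: Set[str] = set()
    comps: List[Set[str]] = []
    for start in sorted(nodes):
        if start in seen:
            continue
        comp: Set[str] = set()
        stack = [start]
        while stack:  # iterative depth-first search
            v = stack.pop()
            if v not in comp:
                comp.add(v)
                stack.extend(adj[v] - comp)
        seen |= comp
        comps.append(comp)
    return comps


def suggest(nodes: Set[str], edges: Set[Edge], palette: List[str],
            dense: float = 0.5, sparse: float = 0.1) -> Tuple[str, object]:
    """Hypothetical rule: add an unplayed note if the graph is too dense, or bridge
    the two smallest components if it is too dispersed."""
    d = density(nodes, edges)
    if d > dense:
        unplayed = [p for p in palette if p not in nodes]
        if unplayed:
            return ("new_note", unplayed[0])
    elif d < sparse:
        comps = sorted(components(nodes, edges), key=len)
        if len(comps) >= 2:
            return ("bridge", (min(comps[0]), min(comps[1])))
    return ("none", None)


tight = {"#C22", "#D14", "#E22"}
all_pairs = {(a, b) for a in tight for b in tight if a != b}
print(suggest(tight, all_pairs, ["#C22", "#D14", "#E22", "#F22"]))
# ('new_note', '#F22')

loose = {"#C22", "#D14", "#E22", "#G22", "#A22"}
print(suggest(loose, {("#C22", "#D14")}, []))
# ('bridge', ('#A22', '#E22'))
```

Swapping the components for a modularity-based community detection, or retuning the thresholds, would change which notes get suggested; the sketch only shows the shape of the decision.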

In this way, a network graph can become a powerful composition device for playing music differently and exploring new patterns and combinations of sound.